Introduction

Periodically, enterprises are confronted with claims that a particular technological development has become unavoidable. In recent years, AI agents have been positioned as the next such inevitability: systems expected to reason, act, and orchestrate work across company boundaries. This narrative has generated both interest and uncertainty, particularly among organizations that have recently invested in classical automation, optimization software, or decision-support systems. The resulting question is whether AI agents represent genuine added value or merely another cycle of technological fashion.

For most enterprise contexts, the answer to whether AI agents are needed is affirmative. This conclusion does not arise from novelty, competitive pressure, or expectations of full automation, but from the structural characteristics of modern enterprise operations. Contemporary organizations increasingly operate within environments shaped by unstructured information, legacy systems, fragmented workflows, and decision-making processes distributed across multiple tools and stakeholders. In such settings, appropriately designed agent-based systems can provide measurable operational advantages.

Exceptions do exist. In certain simpler cases, business requirements can be adequately addressed through classical statistical or predictive methods. However, such cases represent a minority of enterprise processes.

Empirical Evidence from Measuring Agents in Production

Empirical support for this perspective is provided by the study Measuring Agents in Production (Zhou et al., 2025), a comprehensive, large-scale analysis of AI agents deployed across industries. Based on hundreds of real-world implementations, the study demonstrates that AI agents are already in widespread use.

More than 70% of production agents rely on off-the-shelf foundation models without fine-tuning, suggesting that for many companies the choice of model alone is not the primary driver of value. Approximately 68% of agent workflows consist of fewer than ten reasoning or action steps, reflecting a preference for bounded and predictable behavior. Furthermore, 74% of deployed agents incorporate human-in-the-loop verification, indicating that accountability and operational reliability are given priority. This may also indicate a lack of full confidence in autonomous operation or, at least, a need to keep humans partially in control of the processes.

Nevertheless, the research shows that the main reasons for adopting AI in general remain increasing productivity, reducing manual work, and automating repetitive tasks.

Differences Between Academic and Industrial Understanding of AI Agents

The concept of an artificial intelligence agent already has an established meaning in the academic literature. In formal definitions, an agent is typically described as an entity that perceives its environment and acts upon it in pursuit of defined objectives. Canonical formulations focus on properties such as autonomy, environment interaction, policy execution, and, in many cases, learning through optimization or reward mechanisms (Russell & Norvig, 2021; Wooldridge, 2009).

In contrast, contemporary industrial usage of the term agent has evolved in response to practical deployment needs, a shift that has become more visible with the emergence of LLM-based tools, among other developments in the field. Agents are commonly described as systems that orchestrate workflows, invoke external tools, and execute bounded sequences of tasks, often under human supervision. Documentation from widely used frameworks characterizes agents primarily in terms of tool selection, task decomposition, and operational integration, rather than formal autonomy or learning dynamics (LangChain, 2024; Microsoft, 2024).
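The industrial understanding of an agent described above (tool selection, a bounded sequence of steps, human supervision) can be illustrated with a short sketch. The Python example below is hypothetical and does not reproduce the API of LangChain, Copilot Studio, or any other framework: the scripted `plan_next_step` function stands in for an LLM choosing the next tool call, the tools `lookup_order` and `issue_refund` are invented for illustration, and the `max_steps=10` cap is an illustrative value, not a recommendation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tools (assumption); in practice these would wrap enterprise systems.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: delivered, amount 40 EUR"

def issue_refund(order_id: str) -> str:
    return f"refund issued for order {order_id}"

TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "issue_refund": issue_refund,
}
SENSITIVE_TOOLS = {"issue_refund"}  # actions that require explicit human approval

@dataclass
class Step:
    tool: str
    argument: str

@dataclass
class AgentRun:
    log: list[str] = field(default_factory=list)
    escalated: bool = False

def plan_next_step(history: list[str]) -> Optional[Step]:
    """Stand-in for an LLM's tool selection; scripted here for illustration."""
    if len(history) == 0:
        return Step("lookup_order", "A-17")
    if len(history) == 1:
        return Step("issue_refund", "A-17")
    return None  # the planner considers the task complete

def run_agent(approve: Callable[[Step], bool], max_steps: int = 10) -> AgentRun:
    run = AgentRun()
    for _ in range(max_steps):  # hard upper bound on the number of actions
        step = plan_next_step(run.log)
        if step is None:
            return run
        if step.tool in SENSITIVE_TOOLS and not approve(step):
            run.escalated = True  # a human declined: hand the case to an operator
            return run
        run.log.append(TOOLS[step.tool](step.argument))
    run.escalated = True  # step budget exhausted: escalate instead of continuing
    return run

if __name__ == "__main__":
    print(run_agent(approve=lambda step: True).log)
    print(run_agent(approve=lambda step: False).escalated)
```

The model never acquires more authority than the approval callback grants, and the loop stops either when the planner finishes, when a human declines, or when the step budget runs out.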

This difference does not reflect a conceptual misunderstanding that forces anyone to “choose a side”, as if one side were right and the other wrong; rather, it reflects differing evaluative priorities. Academic terminology prioritizes theoretical and formal guarantees, whereas industrial practice prioritizes reliability, controllability, and integration within existing systems. Recognizing this interpretive shift is essential for avoiding miscommunication and for accurately situating agent-based systems within enterprise decision-making.

Conceptual Ambiguity and the Octant Division of Understanding

Discussions on AI agents are often complicated by conceptual ambiguity. The term agent is used inconsistently across academic research and industrial practice, frequently hiding substantial differences in system behavior, autonomy, and learning capability.

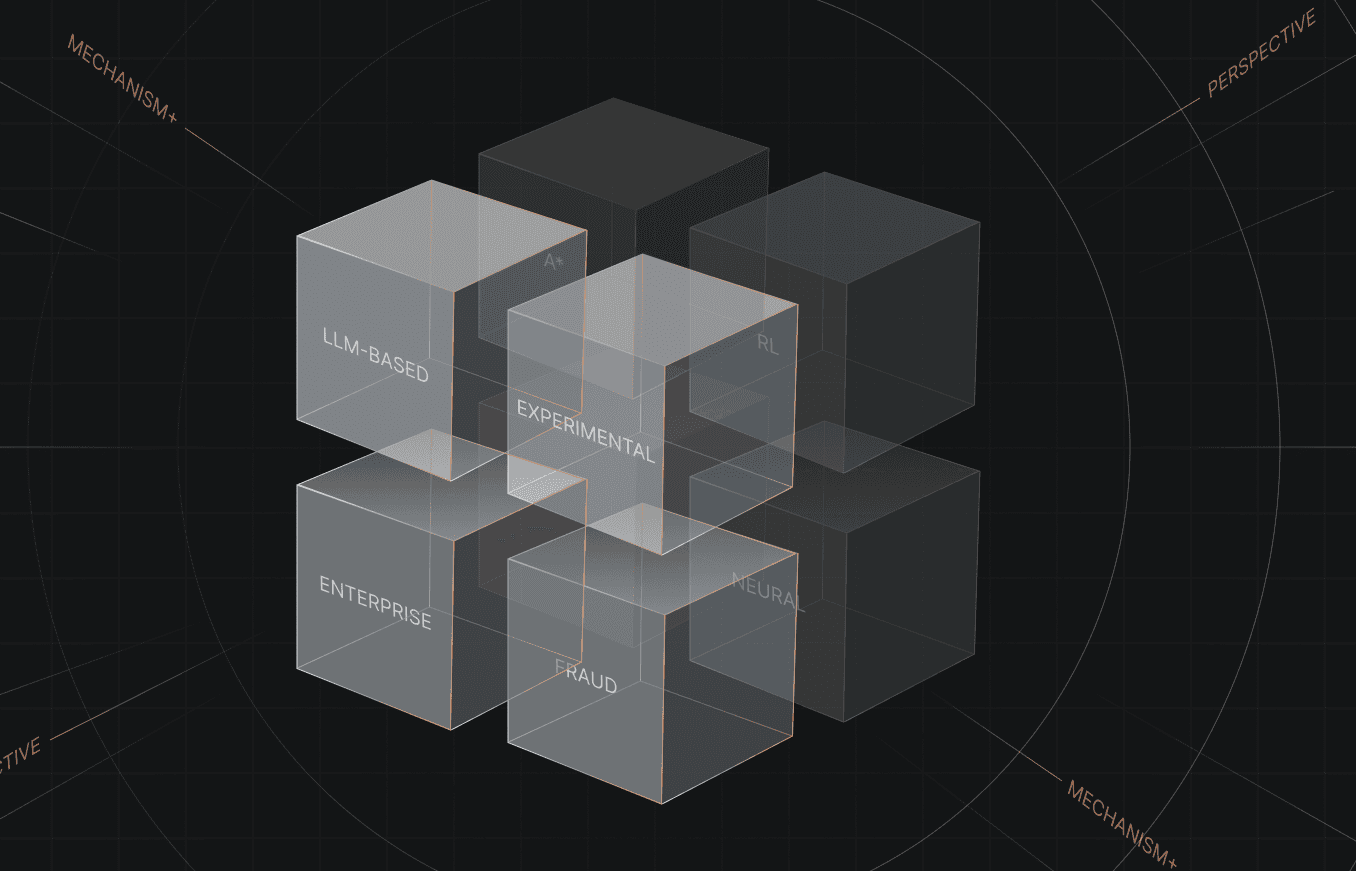

To address this ambiguity, a three-dimensional “space of understanding” is proposed. It distinguishes AI systems along three axes: academic versus industrial interpretation, agentic versus non-agentic behavior, and learning versus non-learning mechanisms. Because each axis is binary, the axes span 2 × 2 × 2 = 8 configurations, each occupying a distinct octant of the space. This octant-based view clarifies how systems labeled as AI agents differ in scope, capability, and risk profile.

The AI Systems Cube as an Octant-Based Interpretation of Agentic Systems

To fully resolve the ambiguity surrounding the term AI agent, it is insufficient to describe the underlying dimensions in isolation. Their combined effect defines a structured conceptual space that can be represented as a three-dimensional cube. Each axis captures a fundamental distinction that shapes how AI systems are designed, evaluated, and discussed.

The three axes are defined as follows: agentic versus non-agentic systems; learning versus non-learning systems; and academic versus industrial interpretations. Together, these axes define a three-dimensional cube of AI system interpretations, where each corner, or octant, corresponds to a distinct class of systems. Importantly, systems located in different octants may all be described using the same term AI agent, despite exhibiting fundamentally different properties. The octant framework distinguishes AI systems not by algorithms alone, but by the role they play, the context in which they are evaluated, and the criteria by which they are judged. This distinction is particularly important when comparing academic and industrial perspectives, where identical technical models may occupy different octants depending on their purpose and use.
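Because each axis is binary, the cube can be encoded directly as a small data structure. The Python sketch below is illustrative only: in particular, the hypothetical `classify` helper reduces each axis to a single yes/no question (is the system deployed in an operational workflow, does it initiate actions, does it update its parameters), which is a simplifying assumption rather than part of the framework itself. It enumerates the eight octants in the order used in the examples below and shows the same forecasting model moving from Octant 3 to Octant 7 once it is deployed in a production workflow.

```python
from enum import Enum
from itertools import product
from typing import NamedTuple

class Context(Enum):
    ACADEMIC = "Academic"
    INDUSTRIAL = "Industrial"

class Agency(Enum):
    AGENTIC = "Agentic"
    NON_AGENTIC = "Non-Agentic"

class Learning(Enum):
    LEARNING = "Learning"
    NON_LEARNING = "Non-Learning"

class Octant(NamedTuple):
    context: Context
    agency: Agency
    learning: Learning

# All 2 x 2 x 2 = 8 corners of the cube. product() varies the last axis fastest,
# which reproduces the Octant 1..8 numbering used in this article.
OCTANTS = [Octant(c, a, l) for c, a, l in product(Context, Agency, Learning)]

def octant_number(o: Octant) -> int:
    return OCTANTS.index(o) + 1

def label(o: Octant) -> str:
    return f"Octant {octant_number(o)}: {o.context.value} - {o.agency.value} - {o.learning.value}"

def classify(deployed_in_workflow: bool, initiates_actions: bool, updates_parameters: bool) -> Octant:
    """Hypothetical helper: one yes/no question per axis (a simplification)."""
    return Octant(
        Context.INDUSTRIAL if deployed_in_workflow else Context.ACADEMIC,
        Agency.AGENTIC if initiates_actions else Agency.NON_AGENTIC,
        Learning.LEARNING if updates_parameters else Learning.NON_LEARNING,
    )

if __name__ == "__main__":
    for o in OCTANTS:
        print(label(o))
    # The same forecasting model, evaluated in isolation and then deployed.
    print(label(classify(False, False, True)))
    print(label(classify(True, False, True)))
```

Only the context argument changes between the last two calls, which mirrors the point that identical technical models can occupy different octants depending on their purpose and use.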

Contextual Distinction Between Academic and Industrial Perspectives

From an academic perspective, AI systems are primarily evaluated as objects of study. The central focus lies on learning behavior, optimization properties, generalization performance, and theoretical guarantees. Success is typically measured using metrics such as accuracy, loss, convergence, or sample efficiency, and systems are often assessed in isolation from broader organizational processes.

In contrast, industrial AI systems are evaluated as components of operational workflows. Here, the primary concern is not the model itself, but how its outputs are integrated into decision-making processes. Industrial systems are judged by their impact on business outcomes, reliability, auditability, and their ability to function within constraints such as governance, regulation, and human oversight. As a result, the same learning model may be considered academic when evaluated in isolation, but industrial when deployed as part of a production system.

Examples Across the Eight Octants

Octant 1: Academic – Agentic – Learning

Example: A reinforcement learning agent trained in simulation to navigate an environment or optimize a policy. Description: The system acts in an environment and continuously improves its behavior based on feedback.
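As an illustration of Octant 1, the following is a minimal sketch of tabular Q-learning, one standard reinforcement learning algorithm, on a hypothetical five-cell corridor. The agent starts at the left end, receives a reward of 1 only on reaching the right end, and improves its action-value estimates from that feedback until its greedy policy is to move right. The environment, reward, and hyperparameters are assumptions chosen for illustration.

```python
import random

N_STATES = 5                           # corridor cells 0..4; cell 4 is the goal (assumption)
ACTIONS = (-1, 1)                      # move left, move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # illustrative hyperparameters

def env_step(state: int, action: int) -> tuple[int, float, bool]:
    """Deterministic corridor: walls at both ends, reward 1.0 on reaching the goal."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done

def best_action(q: dict, state: int, rng: random.Random) -> int:
    """Greedy action with random tie-breaking."""
    top = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == top])

def train(episodes: int = 500, seed: int = 0) -> dict:
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:
            # Epsilon-greedy exploration: occasionally try a random action.
            if rng.random() < EPSILON:
                action = rng.choice(ACTIONS)
            else:
                action = best_action(q, state, rng)
            nxt, reward, done = env_step(state, action)
            # Q-learning update: bootstrap from the best next action unless terminal.
            target = reward if done else reward + GAMMA * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += ALPHA * (target - q[(state, action)])
            state, steps = nxt, steps + 1
    return q

def greedy_policy(q: dict) -> list[int]:
    """Preferred action in each non-goal cell after training."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]

if __name__ == "__main__":
    print(greedy_policy(train()))
```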

Octant 2: Academic – Agentic – Non-Learning

Example: A classical planning agent using fixed rules or search strategies such as A*. Description: The agent performs actions to reach a goal but does not adapt its behavior through learning.
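For contrast with Octant 1, the sketch below shows a non-learning planning agent using A* search on a grid. It reaches its goal by searching over a fixed model of the environment, and running it twice on the same grid yields exactly the same behavior, because nothing is updated between runs. The grid encoding (`#` for obstacles), 4-connected unit-cost moves, and the Manhattan-distance heuristic are assumptions chosen for illustration.

```python
import heapq
from typing import Optional

Cell = tuple[int, int]

def a_star(grid: list[str], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """Shortest 4-connected path on a grid where '#' marks an obstacle."""
    def h(cell: Cell) -> int:
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    open_heap = [(h(start), 0, start)]  # entries: (f = g + h, g, cell)
    came_from: dict[Cell, Cell] = {}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Reconstruct the path by walking back through predecessors.
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None  # goal unreachable

if __name__ == "__main__":
    grid = ["....", ".##.", "...."]
    print(a_star(grid, (0, 0), (2, 3)))
```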

Octant 3: Academic – Non-Agentic – Learning

Example: A neural network trained for image classification or regression in an experimental setting. Description: The system learns from data but does not initiate actions or interact with an environment.

Octant 4: Academic – Non-Agentic – Non-Learning

Example: A rule-based expert system or a static decision tree. Description: The system neither learns nor acts autonomously; it performs deterministic symbolic reasoning.

Octant 5: Industrial – Agentic – Learning

Example: An experimental optimization agent that adapts scheduling or logistics strategies based on performance feedback. Description: The system acts within enterprise workflows and updates its behavior over time, raising governance and safety considerations.

Octant 6: Industrial – Agentic – Non-Learning

Example: An LLM-based support agent that retrieves information, invokes tools, and escalates cases to human operators. Description: The system performs multi-step actions within workflows but operates with fixed model parameters.

Octant 7: Industrial – Non-Agentic – Learning

Example: A fraud detection or demand forecasting model deployed through an MLOps pipeline. Description: The model learns from historical enterprise data but does not initiate actions; its outputs inform decisions made elsewhere in the organization.

Octant 8: Industrial – Non-Agentic – Non-Learning

Example: A basic chatbot or OCR system used for document processing. Description: The system provides static input–output mapping without learning or autonomous action.

Decision-Making Implications

The octant-based clarification provides a structured basis for enterprise decision-making. It supports clearer distinctions between cases where agentic systems are appropriate and those where classical automation or predictive modeling offers greater stability and cost efficiency. Effective selection depends on a realistic assessment of business processes, data maturity, and required levels of autonomy, rather than on technological novelty.

Conclusion

AI agents constitute a meaningful element of modern enterprise system architectures when applied with proportional scope and clear intent. Their value lies not in maximal autonomy, but in appropriately constrained intelligence aligned with organizational needs. The cube-based octant framework, supported by empirical insights from the Measuring Agents in Production (MAP) study, provides a conceptual foundation for navigating the diversity of systems currently described as AI agents.

A central contribution of this work is the explicit clarification of how the term agent is interpreted differently across academic and industrial contexts. In academic discourse, agenthood is primarily associated with formal models of autonomy, environment interaction, and policy-driven behavior, often coupled with learning and optimization. In industrial practice, the same term is more frequently used to denote systems that coordinate workflows, invoke tools, and assist human operators within bounded and controllable processes. Recognizing this distinction does not weaken either perspective; rather, it enables more precise communication, more appropriate system design, and more informed decision-making regarding the role of agentic systems in enterprise environments.

References

Zhou, X., et al. (2025). Measuring agents in production: An empirical study of agent-based systems in real-world deployments. arXiv preprint arXiv:2512.04123. https://arxiv.org/abs/2512.04123

Russell, S. J., & Norvig, P. (2021). Artificial intelligence: A modern approach (4th ed.). Pearson. https://aima.cs.berkeley.edu/

Wooldridge, M. (2009). An introduction to multiagent systems (2nd ed.). Wiley.

LangChain. (2024). Agents documentation. https://docs.langchain.com/oss/javascript/langchain/agents

Microsoft. (2024). Copilot Studio documentation.